The main IEEE VR conference took place from Monday 10th to Wednesday 12th March 2008. See the complete program.

Taking notes was harder because there were no power outlets in this room and the Wi-Fi was struggling, so apologies if this report is less complete. We also had to staff the Virtools booth during breaks, which was pretty exhausting and made us miss some interesting presentations.

The first session was about Augmented/Mixed Reality, and even during the other sessions there was a lot of talk about AR! I wonder whether this is because AR is trendy right now, but I'm pretty sure there is still a lot to research in pure VR! Not that AR isn't interesting, and of course there's a lot of common ground, but it's not my main field of interest. So maybe there should be an IEEE AR conference, or a full AR session where all the AR-specific topics are discussed, such as marker/camera tracking, AR displays, AR applications, etc.

An impressive tracking system based on visual/inertial fusion was presented by Gabriele Bleser and Didier Stricker (“Advanced tracking through efficient image processing and visual-inertial sensor fusion”). It is very robust and showed no visible latency, but it requires a textured CAD model of the environment in order to use an illumination model. Everyone in the room was stunned, and I believe it was the only time the audience spontaneously applauded in the middle of a presentation!
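The talk summary doesn't say how the two sensors are actually fused, and a full tracker like this one is far more elaborate (it also does model-based image processing). Purely as a hedged illustration of why visual and inertial data complement each other, here is a one-dimensional complementary filter, a simpler technique than what such a system would likely use: the gyroscope gives fast, low-latency updates that slowly drift, and each occasional camera measurement pulls the estimate back toward a drift-free value. The function name and the `alpha` weight are hypothetical.

```python
def complementary_filter(gyro_rates, camera_angles, dt, alpha=0.98):
    """Fuse gyro angular rates (rad/s) with sparse camera angle measurements (rad).

    gyro_rates:    one angular-velocity sample per time step.
    camera_angles: same length; None on steps where no camera pose is available.
    alpha:         weight kept on the integrated gyro estimate (hypothetical value).
    """
    # Start from the first camera pose if there is one, otherwise from zero.
    angle = camera_angles[0] if camera_angles[0] is not None else 0.0
    estimates = []
    for rate, cam in zip(gyro_rates, camera_angles):
        # Predict: integrate the gyro rate (low latency, but any bias accumulates).
        angle += rate * dt
        # Correct: blend toward the camera measurement when one arrives.
        if cam is not None:
            angle = alpha * angle + (1.0 - alpha) * cam
        estimates.append(angle)
    return estimates


# A gyro with a constant 0.01 rad/s bias while the true angle stays at 0.
gyro = [0.01] * 1000
with_camera = complementary_filter(gyro, [0.0 if i % 10 == 0 else None for i in range(1000)], dt=0.01)
gyro_only = complementary_filter(gyro, [None] * 1000, dt=0.01)
```

With the gyro alone the error keeps growing with time; with a camera fix every tenth step it stays bounded. A real 6-DOF tracker would typically replace this scalar blend with a Kalman-style filter over full 3D orientation and position.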

Here are some summaries of papers.

Massively Multiplayer Online World as Platform for AR experiences

by Lang, MacIntyre, Jamard.

We saw a prototype augmented reality interface to Second Life: you can see SL avatars in the real world, which is really nice! Even better, an avatar inside SL can record its performance in augmented reality and then watch that video inside SL! This mixing of real and virtual worlds makes me dizzy! It's a really nice application.

See http://arsecondlife.gvu.gatech.edu

[youtube]http://www.youtube.com/watch?v=O2i-W9ncV_0[/youtube]

Providing a wide field of view for AR

by

The first paper was about widening the field of view in desktop AR to improve usability. By mounting the camera on the user's head and mosaicing (stitching) the views, the system provides a proprioceptive match between the real and augmented worlds.
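The summary doesn't describe the paper's stitching algorithm. As a minimal sketch of the general idea, assuming successive views differ only by a horizontal translation (real mosaicing usually estimates a full homography and must cope with motion blur), two overlapping rows of pixel intensities can be registered by trying every shift and keeping the one with the lowest mean absolute difference, then merged. `best_offset`, `stitch` and `min_overlap` are hypothetical names.

```python
def best_offset(left, right, min_overlap=3):
    """Return the shift s such that right[0] lines up with left[s].

    Every shift leaving at least min_overlap overlapping samples is tried; the
    one with the lowest mean absolute difference over the overlap wins.
    """
    best_s, best_err = None, float("inf")
    for s in range(0, len(left) - min_overlap + 1):
        overlap = min(len(left) - s, len(right))
        err = sum(abs(left[s + i] - right[i]) for i in range(overlap)) / overlap
        if err < best_err:
            best_s, best_err = s, err
    return best_s


def stitch(left, right):
    """Mosaic two overlapping rows, averaging the pixels where they overlap."""
    s = best_offset(left, right)
    overlap = min(len(left) - s, len(right))
    merged = left[:s]                                                  # seen only in the left view
    merged += [(left[s + i] + right[i]) / 2 for i in range(overlap)]  # blended overlap
    merged += left[s + overlap:]                                       # left tail, if right ends early
    merged += right[overlap:]                                          # seen only in the right view
    return merged


scene = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
left_view, right_view = scene[0:7], scene[4:10]
```

Here `stitch(left_view, right_view)` recovers the full scene from the two partial views. The `min_overlap` guard matters: with very small overlaps a few samples can match by chance, which is one way misregistration (like the stitching errors users complained about) creeps in.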

Displaying the whole interaction space yields better performance and usability, and also reduces search time and cognitive load.

However, it seems that placing the camera at a fixed location close to the user's head works even better. Stitching errors, mainly due to motion blur, were the users' main concern, but the mosaicing system can still be a good alternative when the camera cannot be fixed at the proper place, or once it has been improved.

Capturing images with sparse informational pixels using projected 3D tags

by

The team's goal is to place tags in the real world that can be read by mobile phone cameras.

Challenges in barcode recognition include tag distance and inclination, as well as ambient illumination.

Moreover, it requires attaching physical tags to the surface.

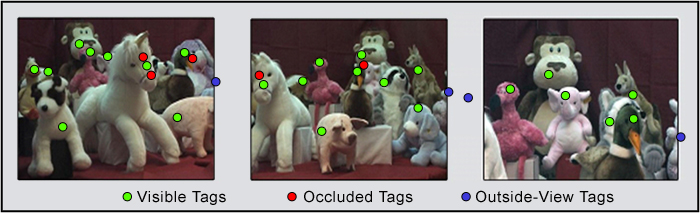

The proposal is to project the optical tags with a projector. Instead of a spatial pattern, this makes it possible to use a temporal pattern, or a spatio-temporal one. Moreover, the tags are projected in the infrared spectrum, so they are not visible to the human eye or to a regular camera.
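To make the idea of a temporal pattern concrete: presumably a single pixel that blinks over successive frames can carry an ID, so the camera does not have to resolve a spatial barcode at a distance or an angle. The sketch below is not the authors' encoding; it assumes a hypothetical 4-frame start marker, an 8-bit ID sent one bit per frame, and a camera that is frame-synchronized with the projector.

```python
PREAMBLE = [1, 1, 1, 0]  # hypothetical start marker for locating the code in time
ID_BITS = 8              # hypothetical payload size


def encode(tag_id):
    """On/off sequence one projector pixel would show, one entry per frame."""
    bits = [(tag_id >> (ID_BITS - 1 - i)) & 1 for i in range(ID_BITS)]  # MSB first
    return PREAMBLE + bits


def decode(samples):
    """Recover the tag ID from per-frame brightness samples of one camera pixel.

    Assumes one sample per projected frame. The threshold sits halfway between
    the darkest and brightest samples; the preamble guarantees both levels occur.
    """
    threshold = (min(samples) + max(samples)) / 2
    bits = [1 if s > threshold else 0 for s in samples]
    n = len(PREAMBLE)
    for start in range(len(bits) - n - ID_BITS + 1):
        if bits[start:start + n] == PREAMBLE:
            value = 0
            for b in bits[start + n:start + n + ID_BITS]:
                value = (value << 1) | b
            return value
    return None  # no start marker found


# Two idle frames, then the code for ID 178, as bright (200) / dark (40) pixel values.
observed = [200 if b else 40 for b in [0, 0] + encode(178)]
```

`decode(observed)` returns 178 without any spatial pattern recognition. A practical scheme would also need to stop the start marker from appearing inside the payload, and to handle unsynchronized or blurred frames.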

These tags make it possible to retrieve information about real objects using cheap phone cameras with very limited computing power.

See http://www1.cs.columbia.edu/CAVE/projects/photo_tags/

Hear-through AR: using bone conduction to deliver spatial audio

by

See http://www.cs.wpi.edu/~gogo/hive

The goal is to augment the auditory sense, not only the visual one as in traditional AR. This requires handling occlusion and reflection between computer-generated and real-world sounds. Why use a bone conduction headset? Because it leaves the real-world sound unoccluded and unmodified, whereas headphones are probably more accurate. A lot of work remains to improve both the sound generation and the hardware, but the results of the study are very interesting.
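The report doesn't say how the system spatializes its sounds. As a hedged sketch of two of the simplest cues a spatial audio renderer can compute per virtual source, here is the interaural time difference from Woodworth's spherical-head approximation and a 1/r distance attenuation. It does not model the occlusion and reflection mentioned above, nor the transfer characteristics of bone conduction. The head radius is a typical assumed value, and the function names are hypothetical.

```python
import math

HEAD_RADIUS_M = 0.0875      # typical adult head radius (assumption)
SPEED_OF_SOUND_M_S = 343.0  # speed of sound in air at about 20 °C


def woodworth_itd(azimuth_rad):
    """Interaural time difference in seconds (Woodworth's spherical-head model).

    azimuth_rad is the source angle from straight ahead, valid from 0 to pi/2;
    a source on the other side gives the same delay applied to the other ear.
    """
    return HEAD_RADIUS_M / SPEED_OF_SOUND_M_S * (azimuth_rad + math.sin(azimuth_rad))


def itd_in_samples(azimuth_rad, sample_rate=44100):
    """The same delay rounded to whole audio samples, to offset one ear's signal."""
    return round(woodworth_itd(azimuth_rad) * sample_rate)


def distance_gain(distance_m, ref_distance_m=1.0):
    """Inverse-distance (1/r) amplitude attenuation, clamped to 1 inside ref_distance_m."""
    return ref_distance_m / max(distance_m, ref_distance_m)
```

For a source directly to one side (`math.pi / 2`) the delay is roughly 0.66 ms, about 29 samples at 44.1 kHz; a source 2 m away plays at half the amplitude of one at 1 m.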