These three great days were very intense: manning the booth, meeting people, attending conferences, trying hardware and applications, and being a jury member for the student competition. First things first, here are some pictures I took there. I should have taken more of the show.

There was not much new hardware, and hardly any new expensive hardware. The novelty came from the use of existing hardware and better software. It seems that VR is at last being democratized: people create cheap, customized input devices, use webcams, recycle hardware not meant for VR, and so on.

Read on for more..

Emotional Immersion

– Verdun (C) David Ademas –

First I want to talk about the “Verdun – 1916” application, which was absolutely fantastic. It was done by students from two schools, ESCIN and ESIEA.

You lie on a modified dentist's chair, strapped in, with several rumble devices attached to you, wearing headphones and an eMagin Z800 HMD. The installation is behind a curtain, so the small room is very dark, and the headphones block out any unwanted sound. You are totally cut off from the real world.

And the experience begins.

My heart began beating hard the moment I was strapped in. I didn’t know what would happen to me; I was totally helpless.

When my avatar opens his eyes, I realize I’m on a battlefield, in 1916 exactly, during World War I. Bombs are exploding all around me, and their shock waves reach me. I can only turn my head; I look around and see fighters running towards the enemy while bombs explode around us. I’d like to move, to run as they do, wherever they’re going. But when I look at my legs, I understand why I can’t. I’m badly injured; I can only lie here and wait for death.

A bomb explodes near me, the end is near. My heart beats faster, I’m afraid.

Then I feel that I’m being dragged. Somebody’s trying to save me, maybe I’ll live! I see dead bodies all around me. I look at my savior, at the dark sky. I can still feel the bombs. We’re in hell. We reach the trenches. Fighters are still running. My savior is looking at me. (A girl told me that at this point she smiled at him.)

A big explosion. A flash. My heart stops beating. We’re all dead.

Students untie me. Back to reality.

I’ve never been immersed in such a way. I had never been emotionally immersed before, and it’s really an incredible experience. That’s exactly the kind of immersion I was hoping for in a virtual world. There was no interactivity apart from the head movement, but maybe that’s the reason why it worked. VR interactivity is still very young and I think it would have broken the magic.

This application won two awards, and the team truly deserves them.

Installations

There were a lot of talks and some installations about medical uses of VR: experiencing a day as a disabled person with a wheelchair simulator [video, in French], or addiction therapy. Invited speaker Ken Graap, CEO of Virtually Better, gave an interesting talk about their work on exposure therapy. The goal is to create a virtual environment where patients will want to smoke, drink, or use drugs, then help them overcome their craving.

There were at least two collaborative touch screens, and it’s nice to see that it can be done with very cheap hardware, like the Anoto pens and paper, or just a webcam.

– Jacob Leitner and his Shared Design Space –

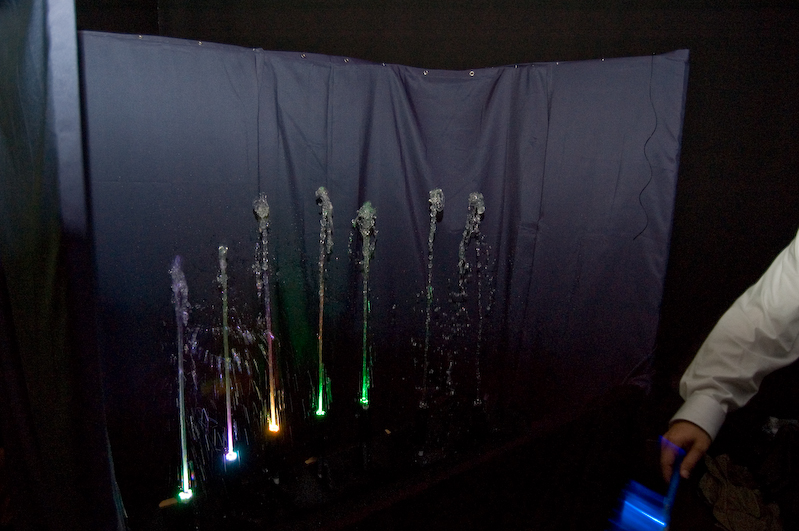

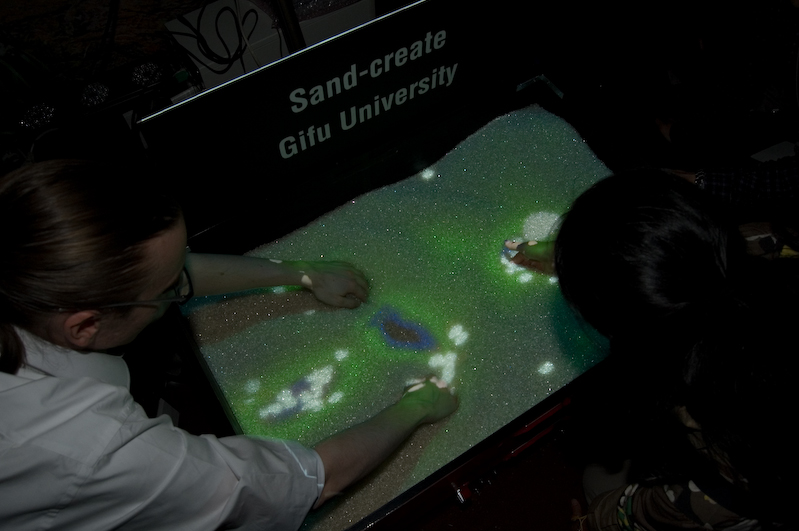

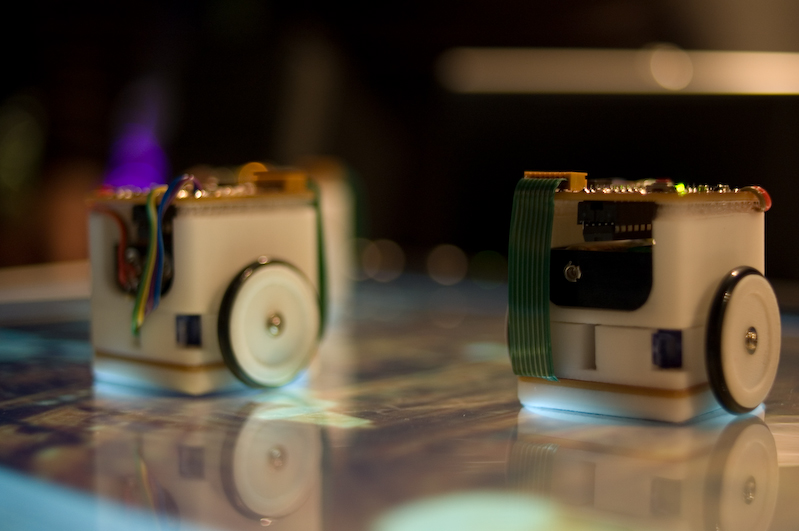

As usual, the Japanese teams brought a touch of poetry: control water to create a visual symphony, build sand valleys, play music with your body, or watch tiny dancing robots.

Hardware

This year saw the rise of optical tracking (A.R.T, Vicon), inertial tracking (XSens, eMagin Z800, Wiimote), and hybrids of both (Worldviz PPT). I didn’t see any magnetic tracking.

XSens had an impressive demo where a man wearing a suit filled with their inertial trackers (gyroscopes, accelerometers, magnetometers) was walking all around the show. You could see his 3D body in real time at their booth. They claim a very low drift. I wrote the driver for Virtools and must admit I was quite impressed by this tracker.

There was barely one autostereoscopic screen; most displays used passive stereoscopy.

There was not much new hardware. Haption demonstrated their new “cheap” haptics device, the Virtuose 6D Desktop (which got an award). This is a more professional haptics device than the Falcon, which only has 3DOF (i.e. translations only).

Crescent demonstrated their new HMD, the HEWDD-768. The specs are pretty impressive: 120° of full stereoscopy, 2x1280x768. My first impression is that it’s heavy and cumbersome (as you can see in the picture), with less brightness and sharpness than an LCD or OLED display. It uses rear projection with a system I didn’t fully understand. But the field of view was impressive, and it doesn’t suffer from the Sensics optics problem. Announced price: 50’000€.

Barco demonstrated an interesting stereoscopic setup in which two people each get their own stereoscopic perspective, through a combination of polarization and Infitec technology. Both viewers can have their heads tracked and each gets a correct view, whereas usually only one viewer can have a correct perspective. But I don’t like the way Infitec modifies the colours, which end up a bit different for each eye. This is particularly striking on some red and green tints.

The eMagin Z800 was the most used HMD at the show. People must have got it before the price increase 😉 I can only hope that they’ll work on the drift of their gyroscope; you have to recalibrate the HMD every two minutes or people would break their necks trying to compensate for the drift. The Verdun installation shows that the Z800 is good enough for full immersion provided that the content is good. (I know that they did a lot of manual recalibration during each run.)

The Wiimote was also widely used, mostly in unusable and/or frustrating ways. Using the Wiimote doesn’t by itself make your application cool and easy to use. Nobody used the IR bar, so there was only simple motion analysis, nothing precise. The sword simulator was particularly disappointing; you couldn’t point precisely at where you wanted to attack. You could only make rough movements and hope that the AI wouldn’t block your attack. Afterwards I saw that a lot of effort had been put into that simulator, but the way it was presented at the show didn’t help it reach its potential, or at least didn’t make me want to take the time to understand it. Maybe better explanations, like a one-minute tutorial and videos showing what to expect, would help.

– Wheelchair Simulator (C) David Ademas –

Nautilus, Kawaii Menee and students each developed their own physical interfaces with simple electronic components to create a train simulator, a bike simulator and a wheelchair simulator. This shows that specialized installations can be built with cheap components and a bit of ingenuity. A lot of cheap interface boards exist to connect sliders and buttons to a PC (Arduino for example).

Lots of applications used a simple webcam and image processing as an input device.

There weren’t many augmented reality applications, except for the popular yet simple Total Immersion demo. The topic was discussed more in the conferences.

Conclusion

My opinion is that we don’t use the full potential of existing hardware. There is a lot of room for improvement on the applications side, and a lot can be achieved with the current hardware; we need better graphics, better interactivity, better use of hardware, and above all better immersion. Immersion is a fragile and complex thing. A lot of work has to be done by application designers, and the slightest detail can break it. Using headphones is a pretty smart way to avoid being disturbed by real life. Therapists even talk to their patients through a microphone to preserve immersion.

Don’t use a device because it’s cool; use it because it suits your application and is so easy to use that it is almost forgotten.

Good graphics are essential too; I don’t mean realism, I don’t mean next-gen graphics. I mean graphics that won’t distract you because they’re not pretty enough. There are lots of talented graphic artists, so don’t just create a team of programmers (this is especially true for some games that were presented at the student competition).

Keep it simple; the current state of 3D interactivity is not yet advanced enough. If you try to do complex interactions you’ll probably end up with something totally unusable. Remember the beginnings of mouse and window interactions. We’re at the very beginning of 3D interaction, so let’s try to find simple things that work (and there aren’t many yet) rather than complex things that don’t. As I said, maybe if Verdun had had some interaction it wouldn’t have worked so well.

New companies are being created and are gaining experience, which shows that the market is growing and maturing, although we’re still at the very beginning. I think installation prices are also dropping thanks to a smarter use of existing hardware and more competition.

So see you in one year to see how all this is evolving 😉

– David experiencing the Khufu/Kheops application in the SASCube after only 3 mins of setup –

Thanks for the feedback !

Hi,

I’m really glad that the Verdun experience had so much success.

A few years ago, in 1998, the jury of Ars Electronica also rewarded a virtual experience around the reminiscence of war, named Worldskin, by Maurice Benayoun. It was and maybe is still running in the Linz Cave.

Those two VR experiences have in common that they immerse the visitor(s) in an evocation of war that is not realistic-looking, yet both have great power to create very strong emotions. This is where VR is definitely sensational. Cheers

Check out Worldskin here:

http://www.youtube.com/watch?v=idOwCf03jmg

http://www.benayoun.com/worldskin.html

http://www.z-a.net/worldskin/index.en.html

Here’s a link to the Verdun website :

http://www.time-machine.info

They have some pictures of the world.

Hi !

I’m very happy to see you enjoyed the “Verdun – 1916” application ^^. I’m one of ESCIN’s students (Alex gave me your blog’s URL). I hope my future work has the same success (but in reality, without Erik Geslin, it will be hard!!! :p).

Thx to you for this article!

And a nice video : http://www.dailymotion.com/video/x20slj_laval-virtual-2007

(through regarde.org)